As you might have noticed in the tablet information, we've put some time and effort into imagining the next steps for the HUD. For the last few months I've been leading a team to make that a reality on the tablet.

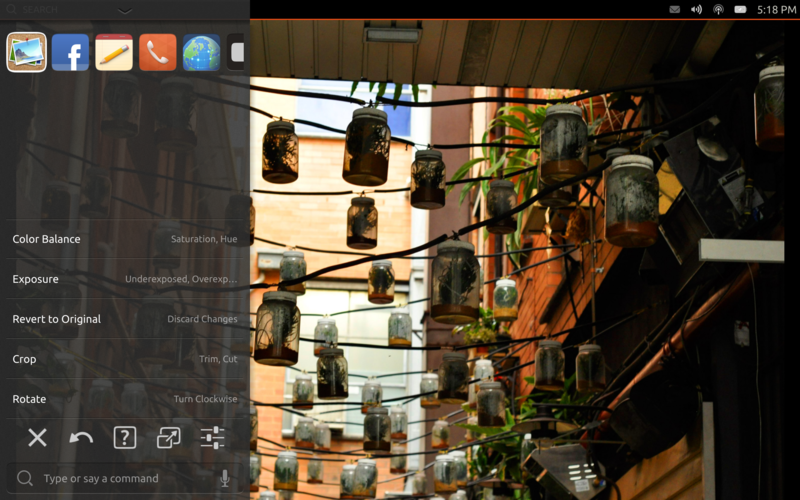

One of the problems facing application developers on a device like a tablet is adding functionality without making the entire interface feel cluttered. We even made the problem harder by emphasizing a content-focused strategy in our SDK. Where do you put controls? There are various tools, including toolbars, but none of them scale to even a moderately complex application. With the power of today's devices ever increasing, it's clear that tablet applications are going to become more complex.

We could just tell application authors not to do it: save that complexity for your desktop UI. But that wouldn't be convergence; that would be creating silos for your applications to live in.

By using the HUD we allow applications to expose rich functionality that the user can reach via search. Users can search through the actions exposed by the application to find the functionality they need. We combine that with historical usage and recently used items to take into account which functionality each user actually relies on in that application. It's the rich functionality of your application, customized for the individual user.
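The idea of combining a text match with usage history can be illustrated with a minimal Python sketch. This is not the HUD's actual matching algorithm: the word-overlap `match_score`, the `ActionSearch` class, and the `usage_weight` bonus are all hypothetical, chosen only to show how past usage can reorder otherwise equal matches.

```python
from collections import Counter
from typing import List


def match_score(query: str, label: str) -> float:
    """Fraction of query words found in the label, ignoring case (hypothetical metric)."""
    words = query.lower().split()
    if not words:
        return 0.0
    label_words = set(label.lower().split())
    return sum(word in label_words for word in words) / len(words)


class ActionSearch:
    """Hypothetical HUD-style search: text match plus a bonus for past use."""

    def __init__(self, labels: List[str], usage_weight: float = 0.1):
        self.labels = labels
        self.usage = Counter()  # label -> number of times the user activated it
        self.usage_weight = usage_weight

    def activate(self, label: str) -> None:
        # Record that the user picked this action, so it ranks higher next time.
        self.usage[label] += 1

    def search(self, query: str) -> List[str]:
        scored = []
        for label in self.labels:
            text_score = match_score(query, label)
            if text_score > 0:  # only actions that match the query at all
                bonus = self.usage_weight * self.usage[label]
                scored.append((text_score + bonus, label))
        # Highest score first; ties broken alphabetically for a stable order.
        scored.sort(key=lambda pair: (-pair[0], pair[1]))
        return [label for _, label in scored]


hud = ActionSearch(["Save", "Save As", "Save Copy"])
hud.activate("Save As")
print(hud.search("save"))  # ['Save As', 'Save', 'Save Copy']
```

Because the bonus grows without limit here, a heavily used partial match could eventually outrank an exact match; a real implementation would probably cap or decay the usage contribution.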

One of the things we realized early in the HUD 2.0 effort is that we can no longer just passively take data from applications and make things better. We needed to go beyond menu sucking to having applications actively target the HUD. We're building HUD functionality directly into the SDK, making it easy for application developers to add actions that are visible in the HUD.

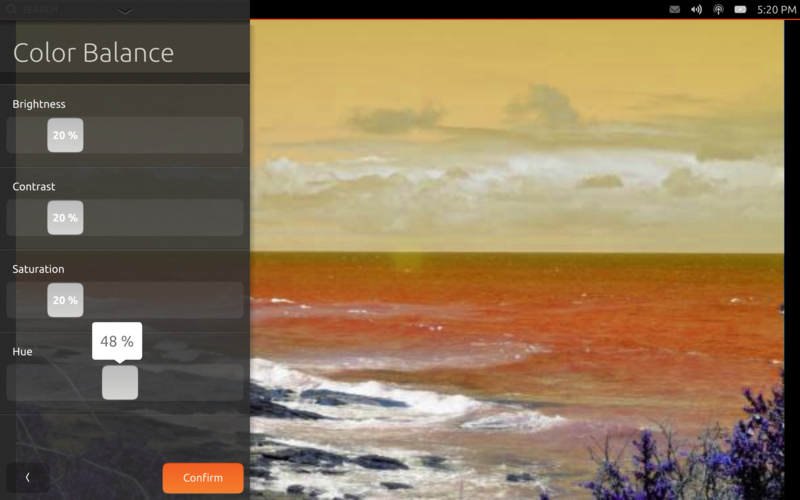

By providing a way for applications to export both actions and their descriptions directly to the HUD, we can make that interaction much richer. We can do things like get the keywords programmatically so that they're included next to the original item definitions. This also allows us to define actions that have additional properties that can be adjusted with UI elements; we're calling these parameterized actions.

Parameterized actions provide a way for applications to create a small autogenerated UI for simple settings and items that are quick to edit. Let's be clear: autogenerated UIs aren't the best UIs, but if done right they can be attractive and effective. We aim to do it right. Hopefully your application has a beautiful primary UI tailored to its specific task, supplemented with additional settings and actions in the HUD; parameterized actions are no different there.

Currently in the code we only have support for sliders of integer percentages. That's pretty limiting. We plan on expanding that to most of the base widgets in the toolkit.
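To make the idea concrete, here is a minimal Python sketch of a parameterized action limited to what the current code supports: integer-percentage sliders. The `PercentSlider` and `ParameterizedAction` classes, the `on_change` callback, and the "Color Balance" example are hypothetical and are not the libhud API; they only show the kind of data an application might hand over so the HUD can generate sliders and report changes back.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class PercentSlider:
    """Hypothetical integer-percentage slider parameter, limited to 0-100."""
    name: str
    value: int = 50

    def set(self, value: float) -> int:
        # Round to an integer, then clamp into the valid percentage range.
        self.value = max(0, min(100, int(round(value))))
        return self.value


@dataclass
class ParameterizedAction:
    """Hypothetical HUD action whose sliders the HUD would render as a small UI."""
    label: str
    sliders: List[PercentSlider]
    on_change: Callable[[str, int], None]  # application callback

    def adjust(self, name: str, value: float) -> None:
        for slider in self.sliders:
            if slider.name == name:
                self.on_change(name, slider.set(value))
                return
        raise KeyError(name)


log = []
balance = ParameterizedAction(
    "Color Balance",
    [PercentSlider("Saturation"), PercentSlider("Brightness")],
    lambda name, value: log.append((name, value)),
)
balance.adjust("Saturation", 120)
balance.adjust("Brightness", 30)
print(log)  # [('Saturation', 100), ('Brightness', 30)]
```

Keeping the clamping in the parameter itself means the application callback only ever sees valid percentages, whatever the generated widget sends.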

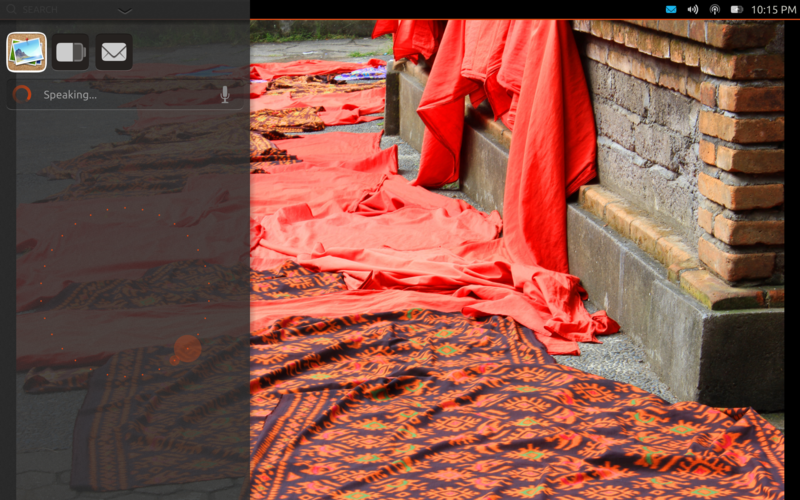

While talking to yourself makes you seem crazy, talking to your tablet is just cool. With the HUD we realized that we had a relatively small data set, so it would be possible to get reasonable voice recognition using only the resources available on the device. That makes voice a great way to interact with an application: keyboards are clunky on any handheld device (though still needed for when you're supposed to be paying attention to the person talking), and voice makes interaction much more fluid.

We built the voice feature around two different Open Source voice engines: Pocket Sphinx and Julius. While we started with Pocket Sphinx, we weren't entirely happy with its performance, and found that Julius started faster and provided better results. Unfortunately Julius is licensed under the 4-clause BSD license, which puts it in multiverse and means we can't link to it in the Ubuntu archive version of HUD. We're looking at ways to let people who do want to install it from multiverse easily use Julius, but what we'd really like is to make the Pocket Sphinx support really great. It's something we'd love help with. We're not voice experts, but some of you might be; let's make the distributable free software solution the best solution.

When we did user testing of the first version of the HUD, one of the biggest problems users had was phrasing a search in the terms used by the applications. It turns out users search for "Send E-mail" instead of "Compose New Message". I'm sure there are even some people who want to "Clear History" while others want to "Delete" it. To help with this we've introduced keywords, which can be added to legacy applications as a sidecar file and defined directly for actions exported through libhud. These keywords are searched as well, giving application authors a way to offer several phrasings of the same action.
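The effect of keywords on search can be sketched in a few lines of Python. The sidecar file format isn't described here, so a plain dictionary stands in for it; the `KEYWORDS` table and the `searchable_terms` and `find_actions` helpers are hypothetical, and simple substring matching is used for brevity rather than whatever matching the HUD actually performs.

```python
from typing import Dict, List

# Hypothetical keyword table standing in for a sidecar file or libhud keyword
# definitions: action label -> alternative phrasings users might type.
KEYWORDS: Dict[str, List[str]] = {
    "Compose New Message": ["Send E-mail"],
    "Clear History": ["Delete"],
}


def searchable_terms(label: str, keywords: Dict[str, List[str]]) -> List[str]:
    """The action's own label plus any keywords attached to it."""
    return [label] + keywords.get(label, [])


def find_actions(query: str, labels: List[str], keywords: Dict[str, List[str]]) -> List[str]:
    """Return labels whose label or keywords contain the query (case-insensitive)."""
    q = query.lower()
    return [
        label
        for label in labels
        if any(q in term.lower() for term in searchable_terms(label, keywords))
    ]


labels = ["Compose New Message", "Clear History"]
print(find_actions("send e-mail", labels, KEYWORDS))  # ['Compose New Message']
print(find_actions("delete", labels, KEYWORDS))       # ['Clear History']
```

The search still returns the application's own label, so the user sees "Compose New Message" even though they typed "send e-mail".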

One of the issues the HUD has in general is discoverability. How do I know that this cool new app I downloaded can do color balancing? Does every app need to run a Super Bowl ad to make consumers aware of its features? We've got some ideas, but come and share yours on the unity-design mailing list.

While the source is published, we don't yet have beautiful documentation and developer help out there. We're working on it. You're welcome to look at the source code, or just hang tight; I'll have another blog post soon.

posted Feb 21, 2013